DURATION

Oct 2024 – Jan 2025

KEYWORDS

Concept Prototyping, Product Design, Motion

Parfait is a startup using AI to bring custom wig shopping and fitting fully online. The concept prototype was created to anchor the company's bridge round pitch and show investors how far the product could go.

I led end-to-end product design for the prototype, which helped the founders secure a $5M bridge round and shape the roadmap that followed. I worked closely with founders Isoken and Ifueko Igbinedion, alongside Joshua Vizzacco, creative director on my team, to turn the concept into a product story that would land with both investors and customers.

The first instinct was to center the story on Parfait’s AI. I pushed in a different direction. A technology-first story can sound impressive, but it rarely lands unless people can immediately understand the problem it solves. The research made that shift clear: customers were not choosing Parfait because they understood the AI. They were choosing it because it solved persistent problems around fit, convenience, time, and confidence. That insight changed the concept. Instead of presenting AI as the headline, I used it as the engine behind a more legible and trustworthy shopping experience.

A big part of the work focused on the scan flow, where trust was breaking down early. In testing, what first looked like a logistical issue turned out to be something more fundamental. Asking users to hold up a credit card during the scan introduced hesitation from the start. People were unsure why it was needed, whether it was safe, and whether they were doing the scan correctly.

The old AI scan asked users to hold up a credit card for accurate measurement. This instruction appeared on the title screen, and the video guide reinforced the behavior by showing a card throughout the steps.

I redesigned the flow around a simpler three-step interaction that removed the card, reduced friction, and gave users clearer feedback as they moved through the scan.

Flow chart of the new, condensed AI scan flow. The three-step flow captures what the model needs while removing the need for a physical card during the scan.

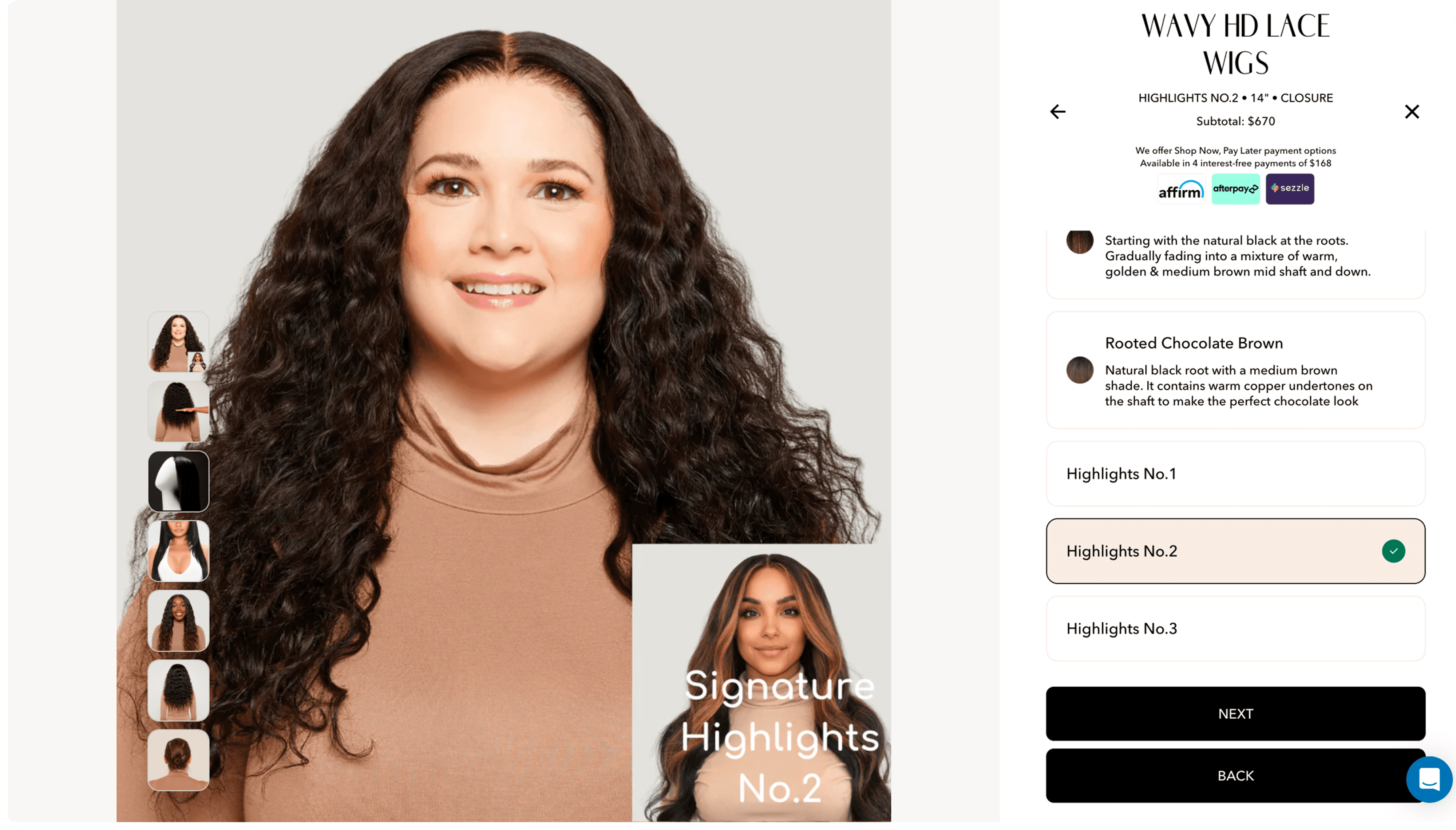

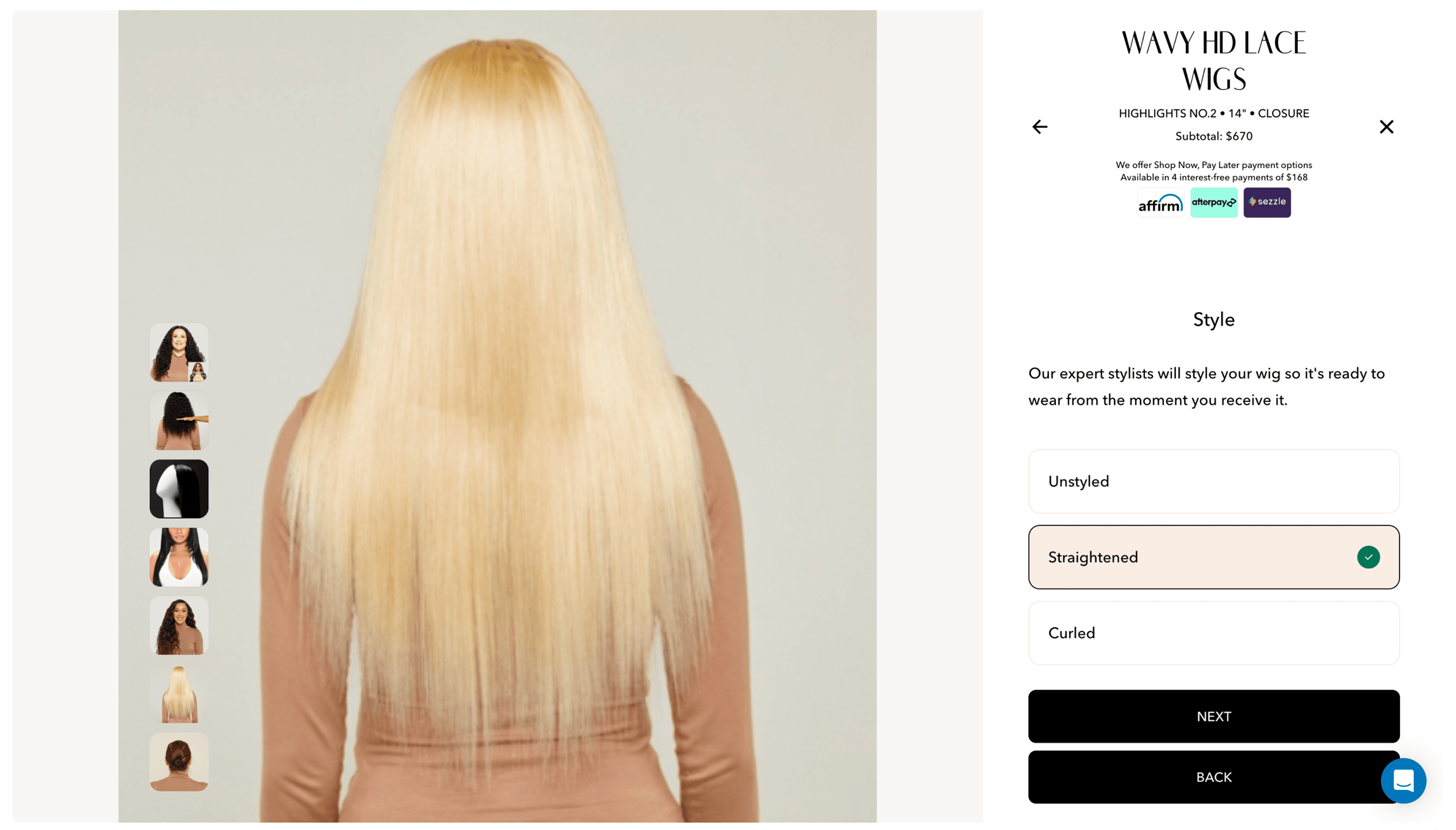

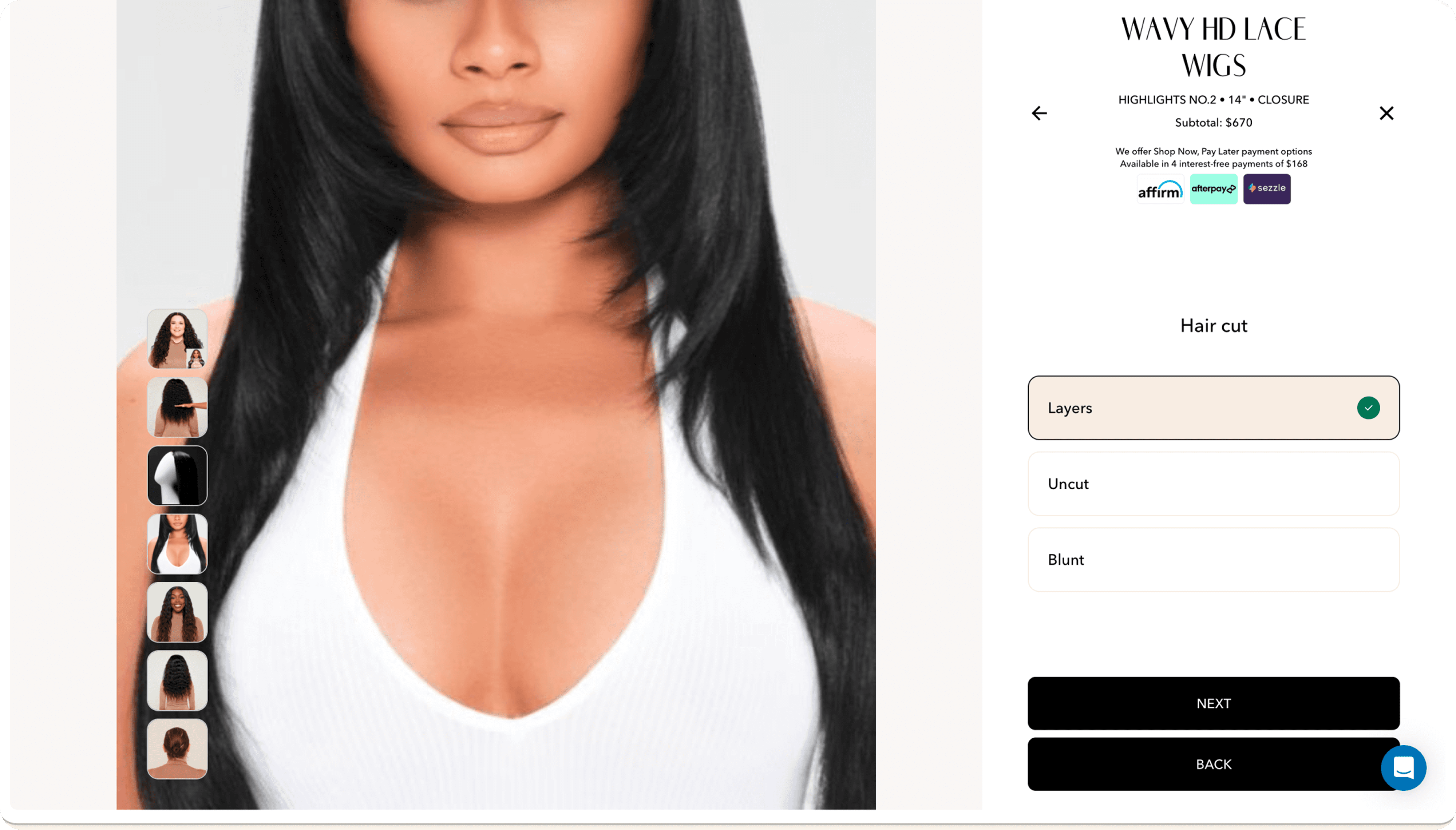

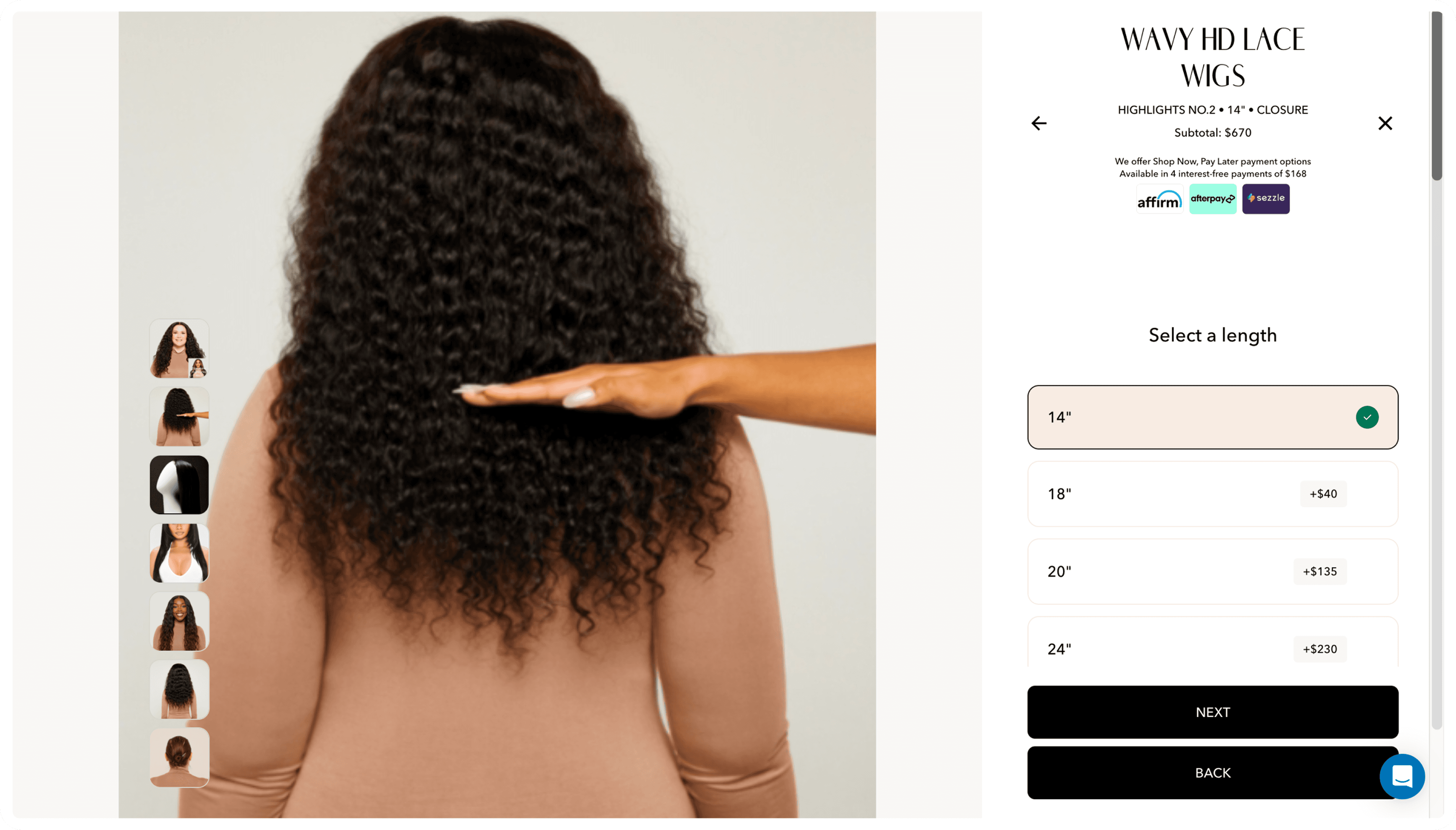

The second major shift was in customization. The existing flow made it difficult to picture the final wig. The experience was fragmented, visuals were static, and customers had to mentally assemble the result from separate choices. In the concept, I made customization more immediate: changes appeared directly on the model, pricing updated in context, and the wig could be inspected from multiple angles. The goal was to move the experience closer to “what you see is what you get,” which felt essential in a category where purchase confidence matters so much.

From these four screens in the existing customization flow, it is difficult to imagine what the final wig will look like. A test participant ran into the same issue.

From there, I extended the idea into try-on. Static product photography could only go so far, especially when customers kept asking how a wig would look on them specifically. I explored multiple directions, including AR and avatar rendering, and recommended an avatar-based try-on for the web. Because the scan already generated the necessary data, it was the most practical path forward and a much better long-term experience.

The prototype ended up doing more than visualizing a concept. It gave the team a clearer product path and gave investors confidence that Parfait could deliver it. The work helped support a $5M bridge round, contributed to a strategic partnership with Ulta Beauty, and introduced six new features, three of which moved onto Parfait's 2025–2026 roadmap. Several smaller improvements from the project also made their way onto the live site.

What stayed with me from Parfait was how much stronger the product became once the story stopped being about AI and started being about the person using it. The most persuasive version of the future was not the most technical one. It was the one that made the customer’s reality feel understood.